Number of Accounts Created Per Domain and Number of Submitted Articles

I am having issues with the operation of SER regarding the number of accounts that it creates per domain and also the number of articles it sends per account.

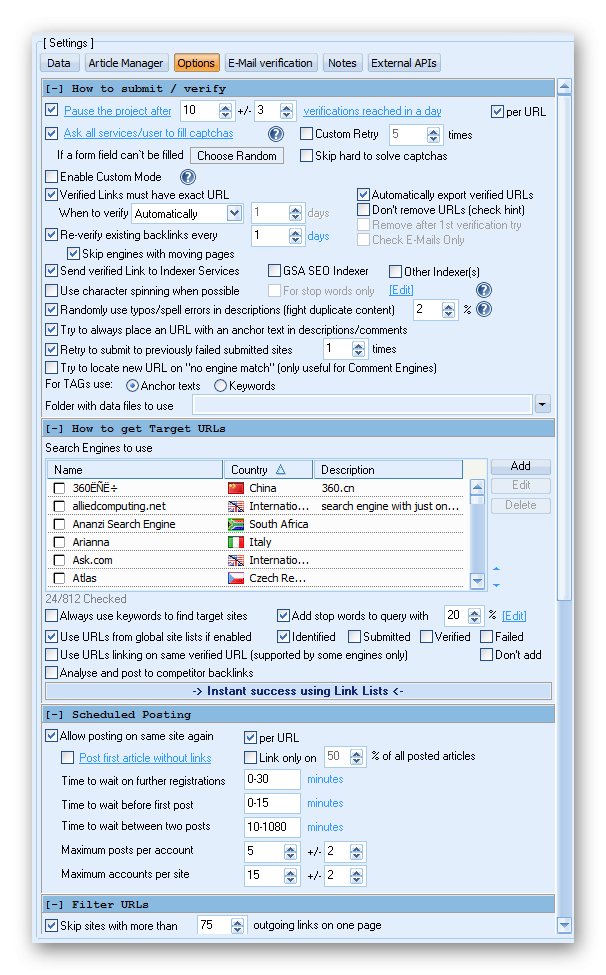

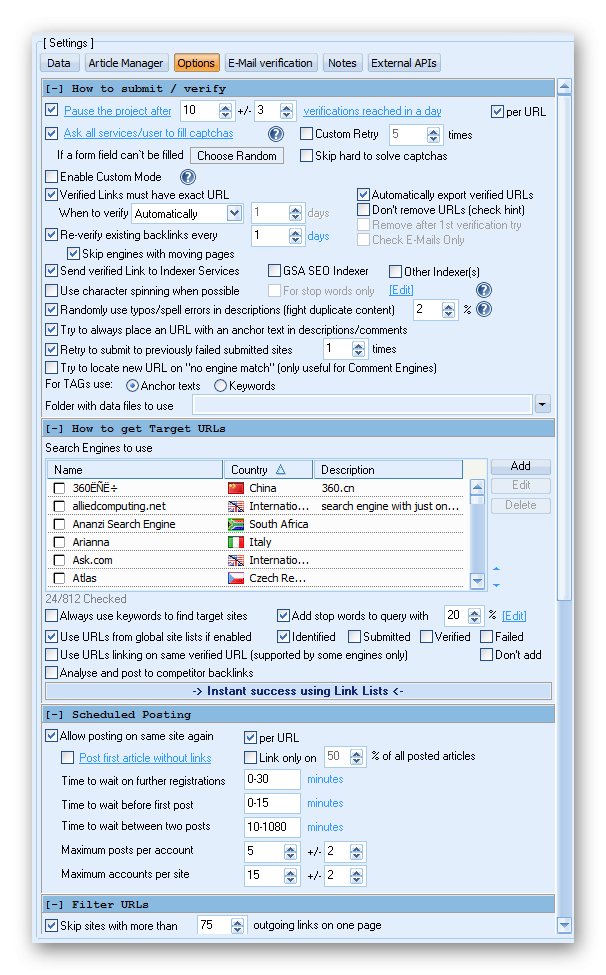

Below are my settings for posting:

However, for one particular DotNetNuke (Social Network) engine, it has created well over 148 different accounts, and it just keeps posting to a few domains several times a day.

Also, when I check "Show remaining Target URLs", I see a long list of URLs it intends to post to. Looking at the numbers, as a webmaster, I believe it would be easy to detect these as spam, and Google will definitely see it the same way considering the quantity.

With a max of 7 posts per account and, say, a total of 17 accounts, that should be a max of about 120 submissions per domain (7 x 17 = 119).

What I don't understand in this scenario is the function of the "per URL" option. Does that imply 120 articles per URL? I need clarification on exactly how these settings work.

Instead of searching and posting to newer domains, it keeps on posting virtually to the same domain all through the day.

Thanks.

Comments

It is a Tier1 Project, and so I want its submission to be a little quieter.

My question specifically is this:

Is it going to multiply this way:

[Number of Accounts x Number of Posts x Number of URLs] per domain

or

If I turn off the "per URL", will it be like this:

[Number of Accounts x Number of Posts]
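
To make the two readings concrete, here is a minimal Python sketch using the figures from my first post (17 accounts, 7 posts per account). The function names and the count of 5 project URLs are purely illustrative assumptions, and this is not confirmed to be how SER actually applies its limits; it only shows how far apart the two interpretations would be.

```python
# Hypothetical helpers comparing the two readings of the limits.
# Nothing here is taken from SER itself; it is plain arithmetic.

def cap_without_per_url(accounts: int, posts_per_account: int) -> int:
    """Reading 2: the limit applies per domain as a whole."""
    return accounts * posts_per_account


def cap_with_per_url(accounts: int, posts_per_account: int, project_urls: int) -> int:
    """Reading 1: the limit is multiplied by the number of project URLs."""
    return accounts * posts_per_account * project_urls


ACCOUNTS = 17           # from the original post
POSTS_PER_ACCOUNT = 7   # from the original post
PROJECT_URLS = 5        # assumed value, for illustration only

print(cap_without_per_url(ACCOUNTS, POSTS_PER_ACCOUNT))             # 119
print(cap_with_per_url(ACCOUNTS, POSTS_PER_ACCOUNT, PROJECT_URLS))  # 595
```

If the first reading is the right one, every extra project URL raises the per-domain ceiling by another 119 posts, which would explain the volume I am seeing.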

The other question would be: does it stop posting to that domain once it has reached the number in question, or does it resume once it sees a new URL added to the Global site Verified list?

Thanks.

PS: I thought you would be on your vacation!

Thanks for the answer. Then how does one avoid such engines? They just waste resources and keep SER from posting to other engines on time because of the time spent on them.

I think there should be a way to restrict SER, or tell it not to post to specific domains at different Tier levels. If it did this at Tier 3 or above, I wouldn't bother much, but at Tier1 I think that is a big problem. It is not going to my Money Site, but hitting my Buffer like that is just not good with Google.

Thanks.

That is basically the fact about using any tool, especially one as delicate and powerful as GSA SER. It has the power to both make you and break you before you know it.

Special attention has to be paid to understanding the intricacies involved in using this monster of a tool. That's probably why I'm being so careful, as I don't want my site to get hurt - at least for now!

Thanks for the hints and best of luck pal.

You can set a custom verification time to, say, do verifications every 10 minutes.

Hope that helps.

I think these are very good options to explore and see how SER responds. Thanks again!

Another solution, and probably the best in terms of not burning your main site URL and not having to worry about overrunning the set verifications per day, would be to diversify the URLs that you use.

Do NOT use only URLs from one domain; that is super bad. You should be diversifying your URLs, meaning use as many URLs as possible from different domains and platforms.

For example: money site URLs, the money site FB page URL, Twitter page URL, YT channel, YT playlist, YT video, LinkedIn company page, and any other high authority links from either web 2.0 or social sites that link to your main site. The more diversity the better...

The URLs were my Buffer backlinks, but I was worried about the huge amounts that were being posted to just one domain - but not mine.