GSA Proxy Scraper questions

@sven I just bought the software and also went through the video tutorial.

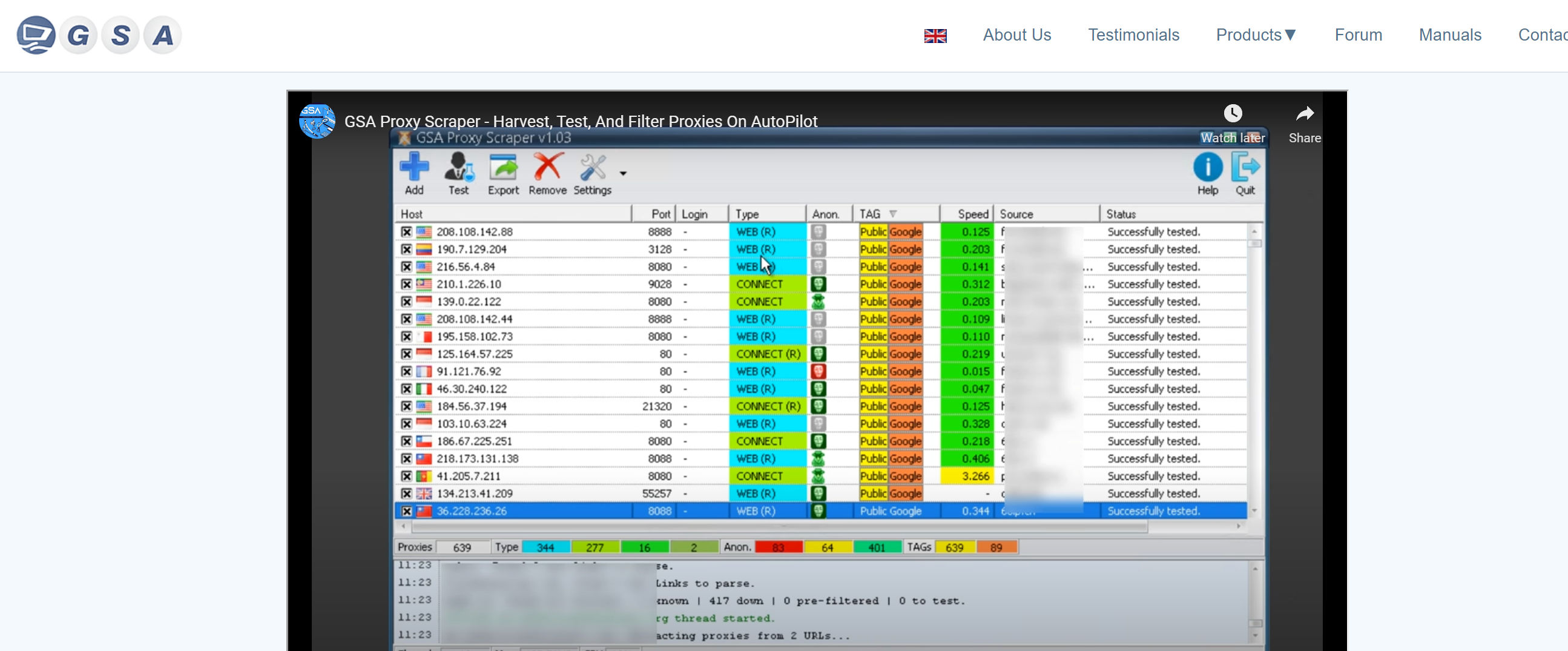

In the video tutorial on your product page, there is a "google" tag in orange to extract Google-passed proxies (I assume this means proxies that can scrape Google search results).

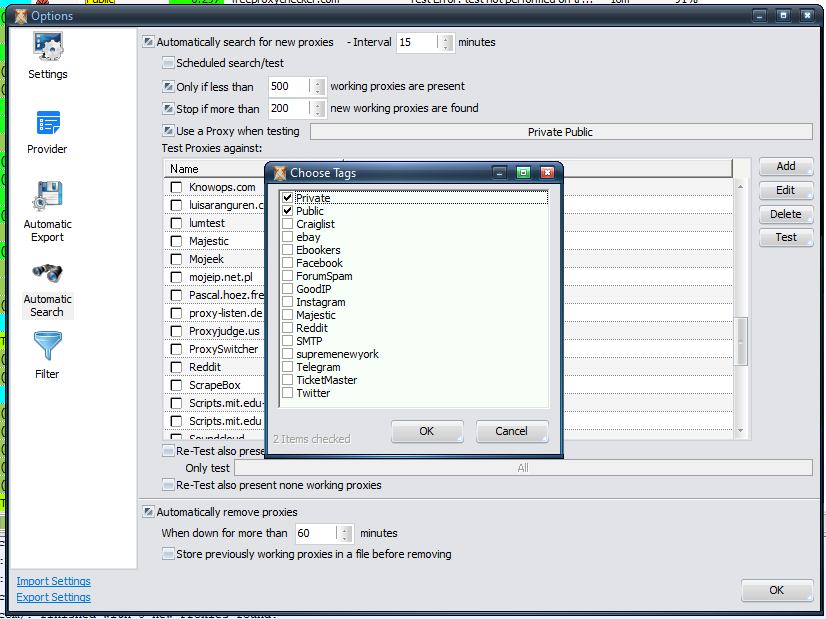

my software interface:

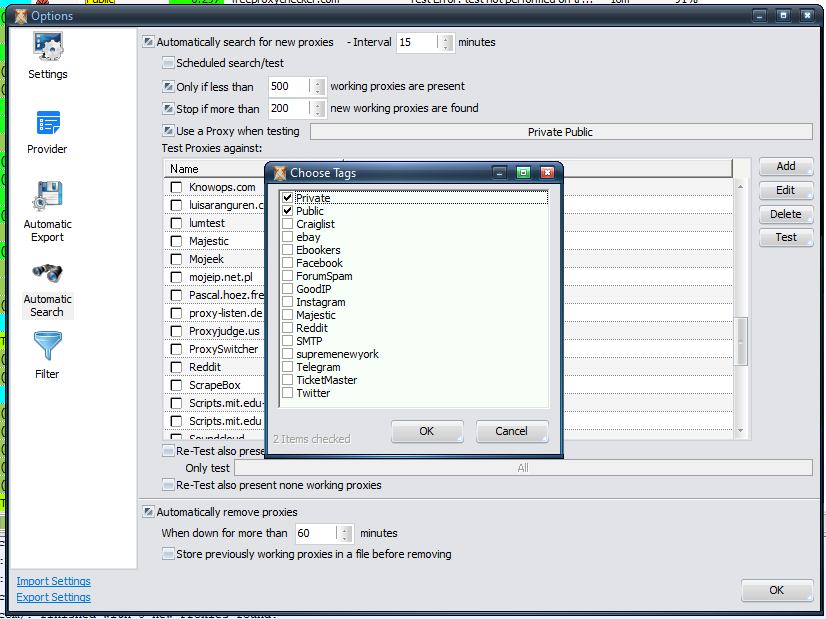

this is my setting for GSA proxy scraper

What I would like to do is:

1. Keep GSA PS running on autopilot to feed Scrapebox to scrape lists for GSA SER

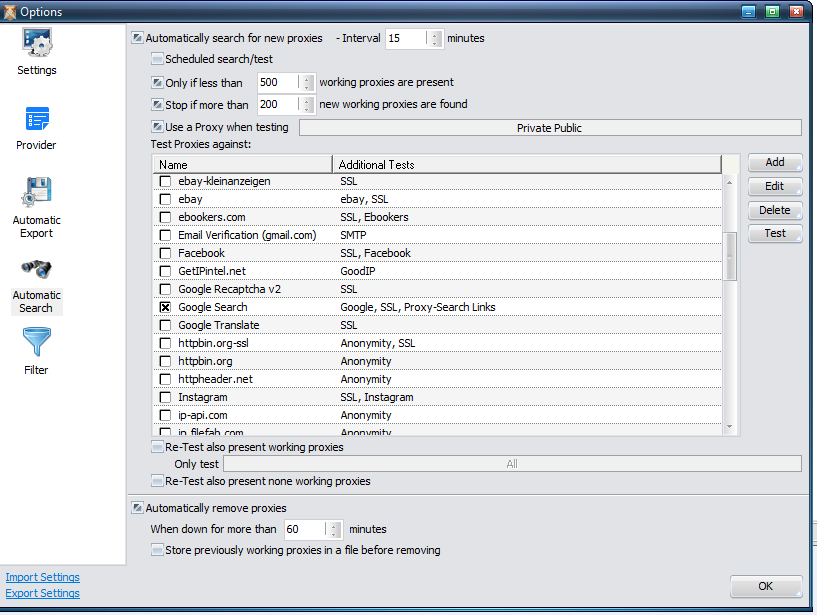

2. Based on the screenshot above, I am getting GSA PS to run every 15 minutes:

- Run only if there are fewer than 500 proxies working against Google search

- If it runs, stop when there are more than 200 new working proxies
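To make the two conditions above concrete, here is a minimal Python sketch of the start/stop rule as I understand it. This is not GSA Proxy Scraper's actual code or API; the function names and defaults are hypothetical and only mirror the numbers from my settings (500 and 200).

```python
# Hypothetical illustration of the schedule rule; not part of GSA Proxy Scraper.

def should_start_search(working_google_proxies: int, minimum: int = 500) -> bool:
    """Start a search only when fewer than `minimum` proxies pass the Google test."""
    return working_google_proxies < minimum


def should_stop_search(new_working_proxies: int, target: int = 200) -> bool:
    """Stop the running search once more than `target` new working proxies are found."""
    return new_working_proxies > target


if __name__ == "__main__":
    print(should_start_search(350))  # fewer than 500 working, so a search starts
    print(should_stop_search(150))   # not yet more than 200 new proxies, keep going
```

So with 350 working Google proxies a search would start, and it would keep running until the count of new working proxies goes above 200.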

"Use a proxy when testing", for this, what should I select? All?

And since this is on autopilot, for the testing "test proxies against", I selected "Google search"

And for the check box, "re-test also present working proxies", I should be selecting "google tag" (as per your video tutorial) but there is no such option and I cannot find the interface to add this option.

----------------

I would like to scrape proxies non-stop and feed them to Scrapebox (on autopilot). Can you (or anyone) kindly assist? I am quite confused by the interface.

Comments

Btw, instead of exporting manually, how can I get GSA PS to auto-feed the latest proxies to Scrapebox?

I appreciate your help via PM. I thought I would share my experience here so that anyone else looking for the same information will find it useful.

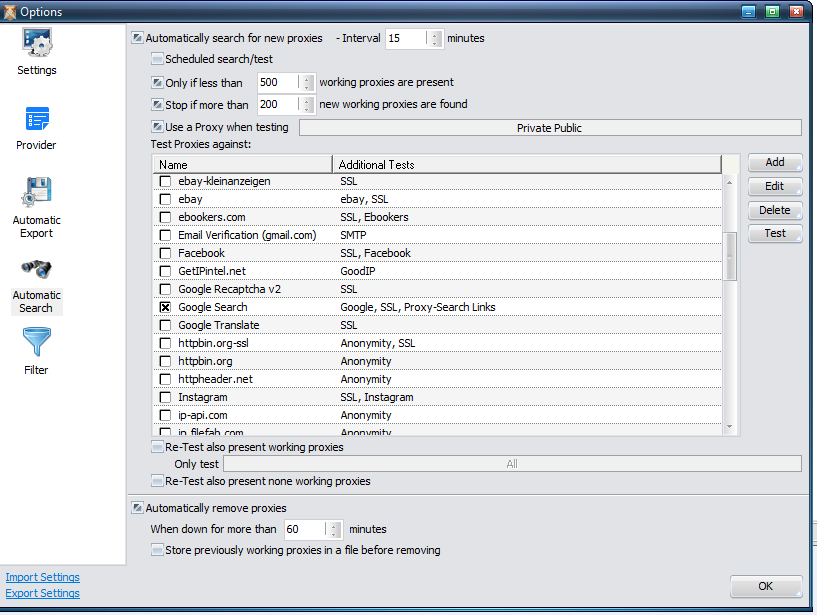

This is the settings UI; I have yet to do anything with it.

Provider setting:

I checked all of them, but according to the prompt from GSA PS, some of the lists have not been updated for a while, so it is probably advisable to skip those when running GSA PS.

For "Automatic export", I set this to 15 mins

For "Automatic Search", I set this to run every 5 minutes.

As I only want proxies for Google scraping, under "Test Proxies against" I selected "Anonymous" (according to the program's prompt, this option should not be skipped), and I guess the reason is to ensure that the IP of the system running GSA PS is not leaked. The other option I selected is "Google Search".

I also set "Re-test also present working proxies" to "only test" Google, as I want to ensure all present proxies are working against Google.

"Automatically remove proxies" when down for more than 11 minutes: since my search interval is 5 minutes, I set this to 11 minutes so that any proxy still not working after 2 intervals (5 min x 2) will be removed on the third try.

For the "Filter" setting, for "Accept only if tagged as" I set it to Google as I only want proxies to scrape Google.
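To sanity-check the removal timing mentioned above (5-minute search interval, 11-minute removal threshold), here is a small Python sketch of the arithmetic. It assumes downtime is counted from the last successful test and that a proxy is re-tested once per interval; I do not know whether GSA PS measures it exactly this way, and the function name is hypothetical.

```python
# Hypothetical arithmetic check; assumes downtime accumulates by one interval
# per consecutive failed re-test. Not GSA Proxy Scraper's actual logic.

def failed_checks_until_removal(interval_min: int, max_down_min: int) -> int:
    """Return which consecutive failed check first pushes downtime past the threshold."""
    failed_checks = 1
    # Downtime after n failed checks is n * interval; removal needs "more than" the threshold.
    while failed_checks * interval_min <= max_down_min:
        failed_checks += 1
    return failed_checks


if __name__ == "__main__":
    print(failed_checks_until_removal(5, 11))
```

Under those assumptions, a proxy is at 5 and 10 minutes of downtime after the first two failed checks (both within the 11-minute limit) and is removed at the third failed check, when downtime reaches 15 minutes.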

@Sven I was wondering if you could let me know whether I'm doing anything wrong.

Thanks!