I have imported around 90 million unique URLs as new targets and they get deleted with these settings

ComputerEngineer


I have crawled quite a few URLs myself and deduplicated them.

I import them into a fresh project.

After a while, the project's target URLs get deleted.

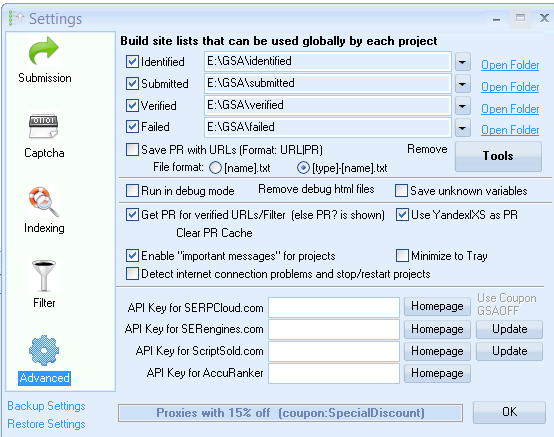

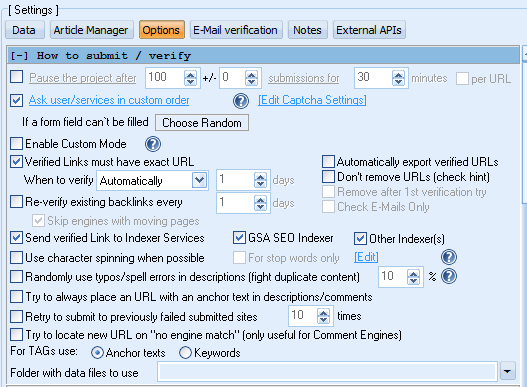

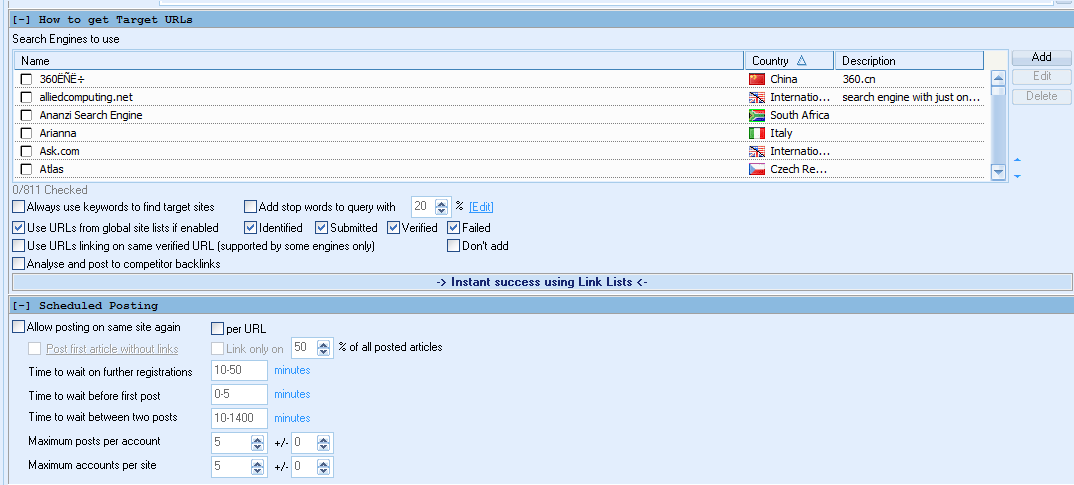

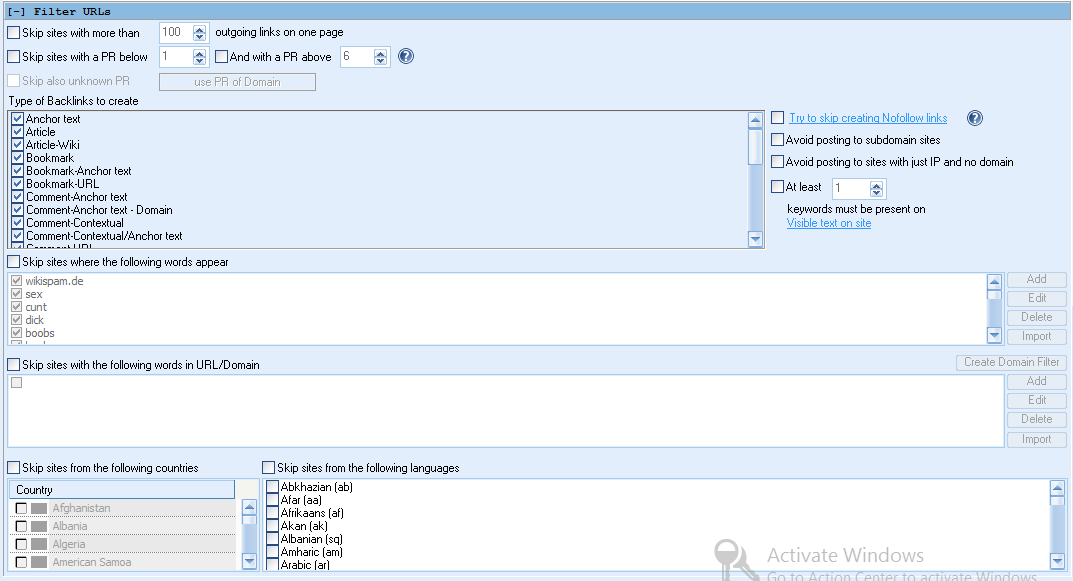

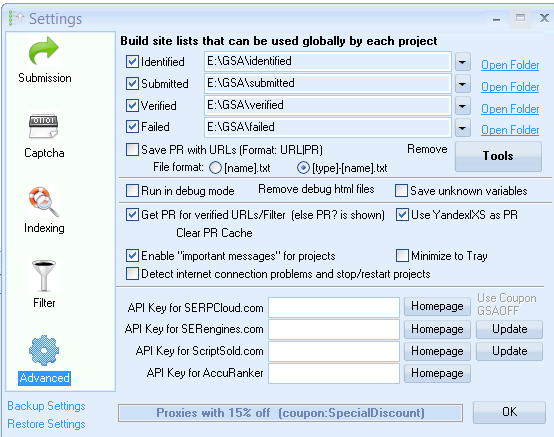

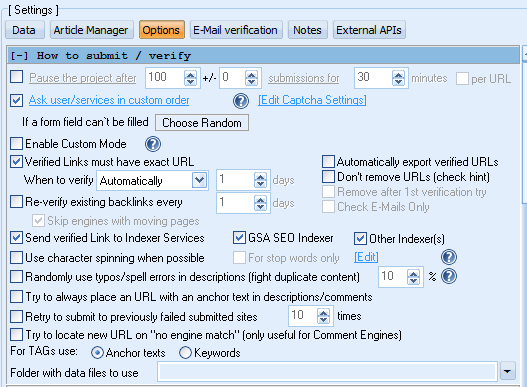

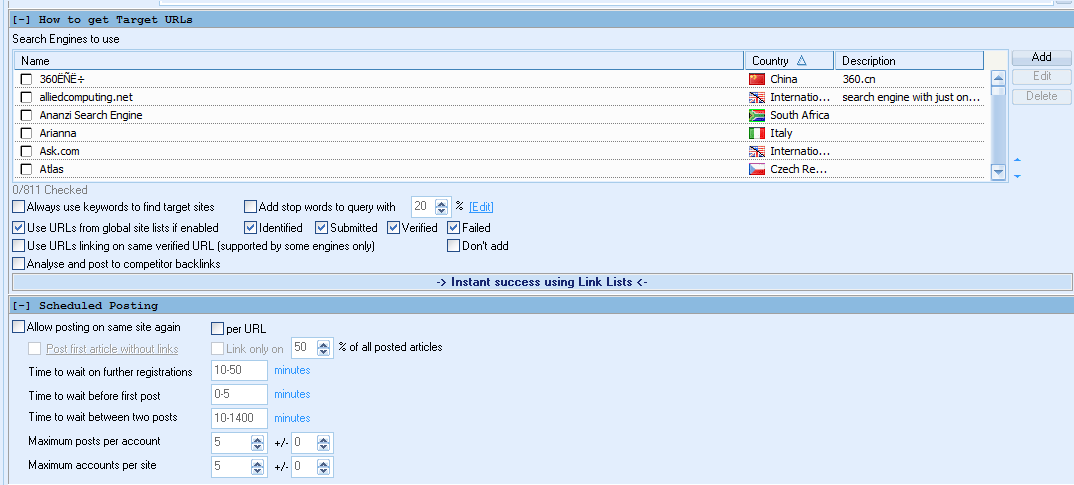

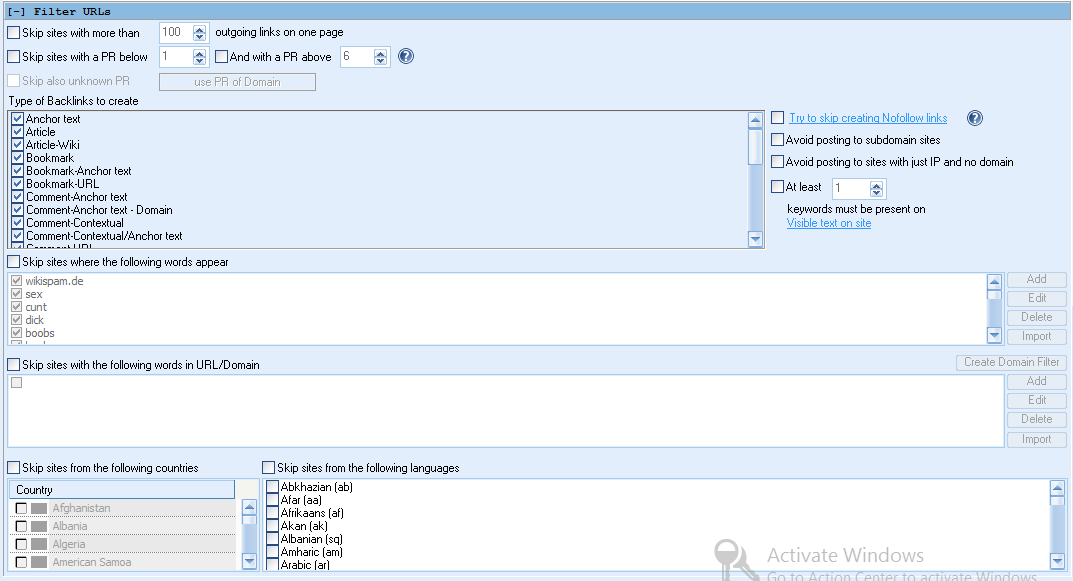

Here are my settings.

Is there a maximum number of URLs or a maximum file size that GSA SER supports?

The imported target URLs file is 6.13 GB.

The import itself succeeds, because I can see the target URLs file in the project's folder.

GSA SER starts by fetching around 16k URLs.

But today, when I woke up after about 10 hours, I saw that the target URLs in the project folder had been reset and only several megabytes of target URLs were left.

I am trying again.

The fresh project starts with:

Loaded 16777 URLs from imported sites

And damn, the target URLs already got reset.


Comments

It comes to around 610 MB.

Let's see whether it works this time.

OK, now it shows an average of 10M URLs left.

This is different.

Now it is working.

Each piece is about 600 MB.

So there is certainly a limit to what the application can handle.
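For anyone wanting to try the same workaround, here is a minimal Python sketch of splitting a large target list into pieces of roughly 600 MB before importing them one by one. It assumes the list is a plain text file with one URL per line. The function name `split_by_size`, the `part_NNN.txt` file names, and the 600 MB default are illustrative choices, not anything GSA SER provides, and 600 MB is only the size that happened to work in this thread, not a documented limit.

```python
import os


def split_by_size(src_path, out_dir, max_bytes=600 * 1024 * 1024):
    """Split a newline-delimited URL list into files of at most max_bytes,
    never cutting a URL in half. Returns the list of written file paths.
    (Hypothetical helper; the 600 MB default mirrors what worked in this thread.)"""
    os.makedirs(out_dir, exist_ok=True)
    parts = []
    out = None
    written = 0
    with open(src_path, "rb") as src:
        for line in src:
            # Make sure the last URL in the file also ends with a newline
            if not line.endswith(b"\n"):
                line += b"\n"
            # Start a new piece if this line would push the current one past the limit
            # (a single line longer than max_bytes still gets its own piece)
            if out is None or (written > 0 and written + len(line) > max_bytes):
                if out is not None:
                    out.close()
                path = os.path.join(out_dir, f"part_{len(parts) + 1:03d}.txt")
                out = open(path, "wb")
                parts.append(path)
                written = 0
            out.write(line)
            written += len(line)
    if out is not None:
        out.close()
    return parts
```

Usage would be something like `split_by_size("targets.txt", "pieces")`, then importing each `pieces/part_NNN.txt` file separately. Working in binary mode and streaming line by line keeps memory use flat even for a multi-gigabyte list.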

I right-clicked the project and selected the "import target URLs from file" option.

Sure, I will send you the file in a private message.

I had some 15-20 million identified, and when I ran the cleanup as shown in the figure, the number of identified went up to 50 million. All projects were stopped while it ran, and it took about 2 days to finish. I have seen this happen 2-3 times on different servers over the past 2 years.

I have tried with 5 million links, but I don't know why the target URLs drop to zero within an hour. I am still confused: how can they be processed so quickly, with so few links built from them? I have tried it with many lists. I thought the problem was only on my side, but after seeing this thread it looks like others are facing the same issue.

I tried processing the list with GSA PI first to clean it up as much as I could. I even trimmed the list size, but the result is the same for me.